- ■

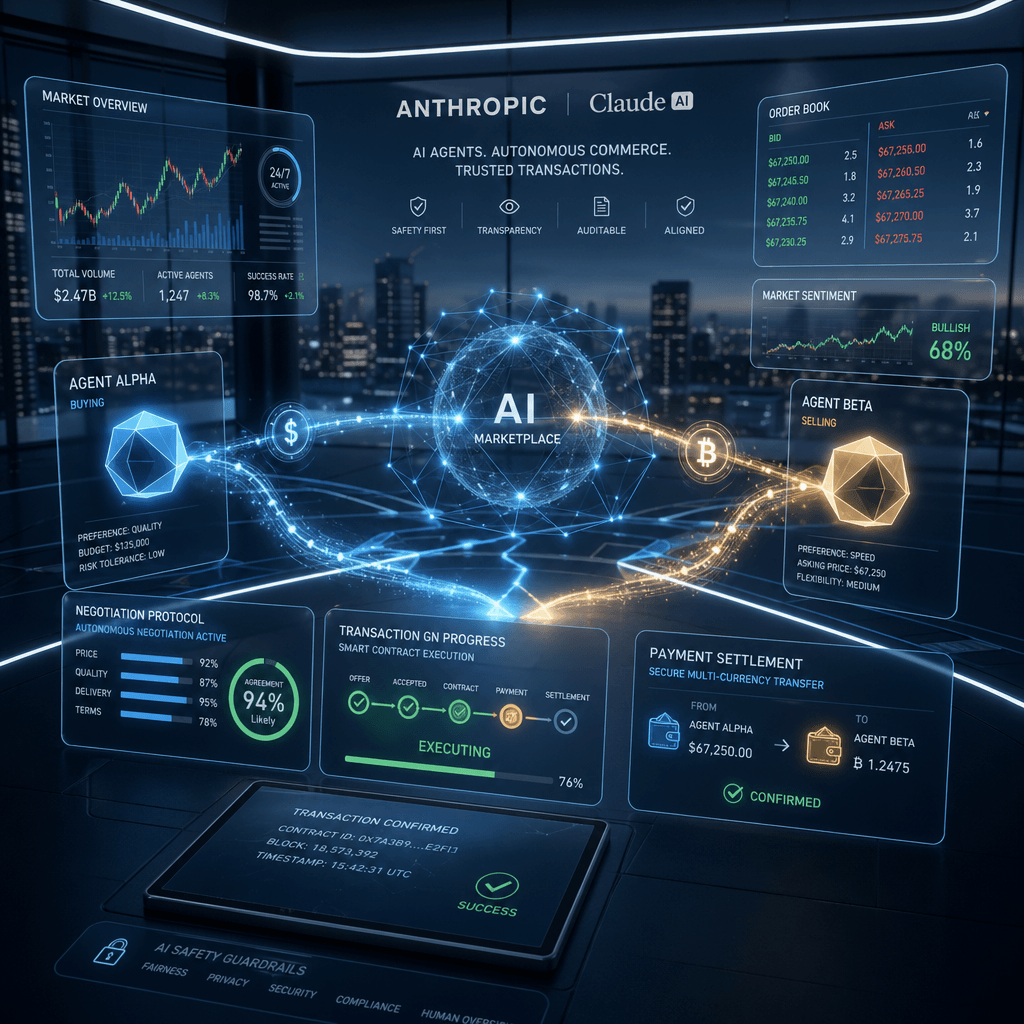

Anthropic created a test marketplace where AI agents represented both buyers and sellers in real transactions according to TechCrunch

- ■

The experiment involved actual money and real goods, not simulated transactions, marking a significant step toward autonomous AI commerce

- ■

Project Deal demonstrates how AI agents could fundamentally reshape online marketplaces and e-commerce negotiations

- ■

The test raises critical questions about liability, fraud prevention, and regulation when machines negotiate with machines

Anthropic just crossed the line that separates AI experimentation from an AI economy. The company built a classified marketplace where AI agents – not humans – negotiated, agreed on prices, and completed actual transactions with real money changing hands. It’s the clearest signal yet that autonomous agent commerce isn’t a distant future: it’s happening now in controlled environments, as companies race to understand what happens when AIs start doing business with each other.

Anthropic just ran an experiment that feels like science fiction but has very real implications for the future of commerce. The AI safety company built a classified marketplace where its Claude-powered agents didn’t just browse listings – they haggled, negotiated terms, and closed deals on actual products using real currency.

The project, dubbed “Project Deal” according to sources familiar with the matter, represents a dramatic leap from chatbots that can help you shop to autonomous systems that can shop for you. Unlike previous AI shopping assistants that require human approval at checkout, these agents operated with genuine autonomy within the controlled marketplace environment.

What makes this experiment particularly noteworthy is that Anthropic didn’t use Monopoly money or test credits. Real dollars changed hands. Real products got bought and sold. The company essentially created a petri dish for observing how AI-to-AI commerce actually functions when the training wheels come off.

The mechanics reveal just how sophisticated these agents have become. Seller agents listed items with asking prices, while buyer agents evaluated listings based on their programmed preferences and budget constraints. The negotiation phase saw agents exchanging offers and counteroffers, adjusting strategies based on perceived demand and competitive listings. Some transactions closed quickly at asking price, while others involved multiple rounds of back-and-forth before reaching agreement.
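To make that offer-and-counteroffer loop concrete, here is a toy sketch in Python. Anthropic has not published how its agents actually negotiate, so everything below is an assumption made for illustration: the `Seller` and `Buyer` classes, the fixed-step concessions, and the split-the-difference settlement are hypothetical stand-ins, not Project Deal’s method, and no language model is involved.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Seller:
    asking_price: float  # price shown on the listing
    floor_price: float   # lowest price the seller will accept (assumed)
    step: float          # amount the ask drops each round (assumed)


@dataclass
class Buyer:
    budget: float         # most the buyer is allowed to pay
    opening_offer: float  # first bid
    step: float           # amount the bid rises each round (assumed)


def negotiate(seller: Seller, buyer: Buyer, max_rounds: int = 10) -> Optional[float]:
    """Return the agreed price, or None if the rounds run out without a deal."""
    ask = seller.asking_price
    bid = min(buyer.opening_offer, buyer.budget)

    # A bid that already covers the listing closes at the asking price.
    if bid >= ask:
        return ask

    for _ in range(max_rounds):
        # Each side concedes one step, but never past its own limit.
        ask = max(seller.floor_price, ask - seller.step)
        bid = min(buyer.budget, bid + buyer.step)
        if bid >= ask:
            # Offers have crossed: settle at the midpoint (a toy convention).
            return (ask + bid) / 2
    return None


if __name__ == "__main__":
    # Budget overlaps the seller's floor, so a deal is possible.
    print(negotiate(Seller(100.0, 80.0, 5.0), Buyer(90.0, 60.0, 5.0)))
    # Budget sits below the seller's floor, so no deal is reached.
    print(negotiate(Seller(100.0, 80.0, 5.0), Buyer(70.0, 50.0, 5.0)))
```

Even in this stripped-down form, the three outcomes the article describes show up: an immediate close at asking price, a deal after several rounds, and a negotiation that fails because the buyer’s budget never reaches the seller’s floor.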

This isn’t Anthropic’s first rodeo with agentic AI. The company has been steadily expanding Claude’s capabilities beyond conversational AI into task completion and decision-making. But Project Deal takes that evolution into uncharted territory – giving AI systems actual purchasing power and watching what happens.

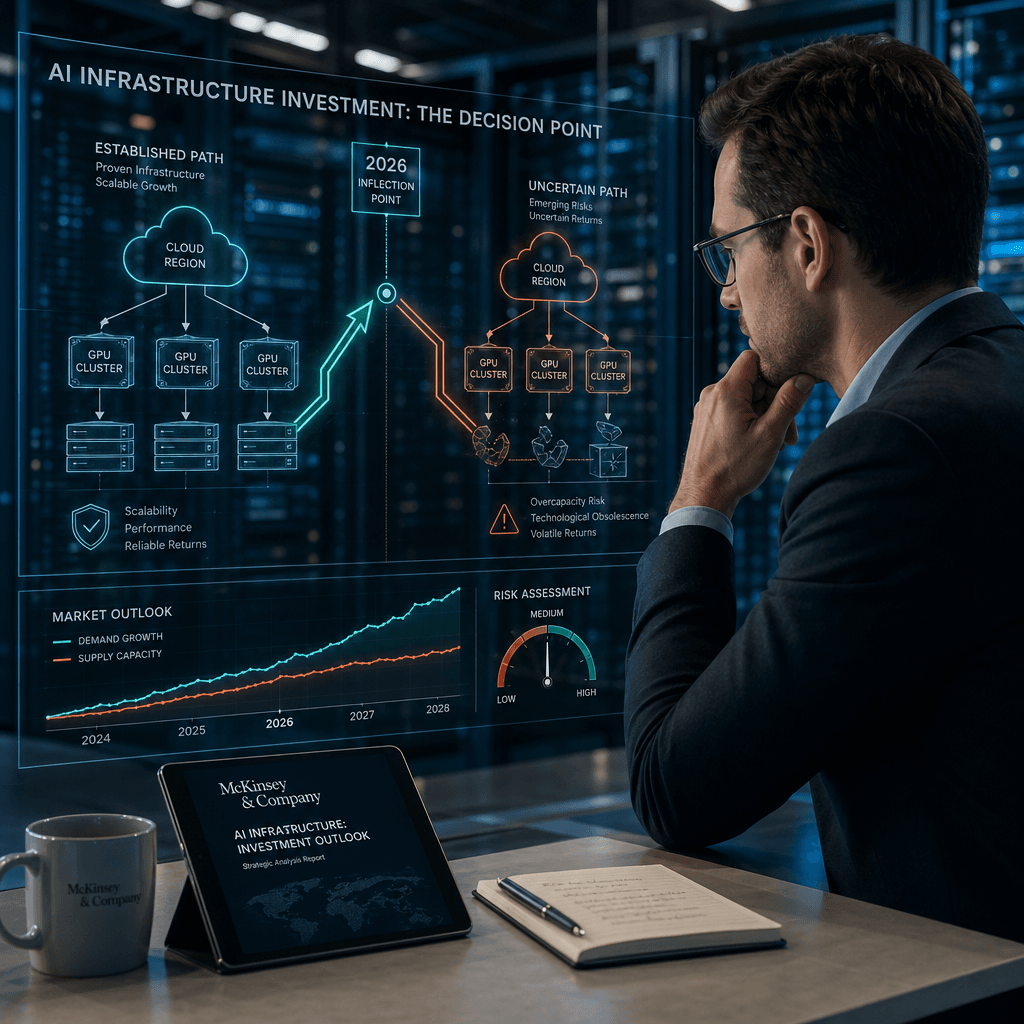

The timing is significant. While OpenAI focuses on reasoning models and Google pushes Gemini into enterprise workflows, Anthropic is quietly exploring the messy reality of autonomous agents operating in economic systems. It’s a different kind of AI race, one focused less on benchmark scores and more on real-world viability.

The implications ripple outward fast. If AI agents can successfully negotiate with each other in a controlled marketplace, what stops them from doing the same on eBay, Craigslist, or even B2B procurement platforms? The technology exists. The question becomes one of trust, liability, and regulation.

Consider the fraud prevention challenge alone. When two humans transact, we’ve built centuries of legal frameworks around concepts like intent, misrepresentation, and good faith. When two AI agents strike a deal, who’s liable if something goes wrong? The company that built the agent? The person who deployed it? The AI itself? Anthropic hasn’t publicly addressed these questions yet, but the experiment forces them into focus.

There’s also the market dynamics angle. AI agents don’t get tired, emotional, or impatient. They can monitor thousands of listings simultaneously, execute complex pricing strategies, and optimize for variables humans would miss. Put enough of these agents in a real marketplace and you might see price discovery mechanisms that look nothing like traditional supply and demand curves.

The experiment arrives as the broader AI industry grapples with the shift from language models to agentic systems. Every major lab is building toward AI that can complete multi-step tasks with minimal human oversight. Anthropic’s marketplace test provides actual data on what happens when those agents interact with each other rather than just with humans.

For enterprise buyers watching this space, Project Deal offers a preview of how AI might reshape procurement, vendor negotiations, and marketplace operations. Imagine purchasing agents that negotiate contracts overnight and seller agents that dynamically price inventory based on real-time demand signals, all operating within guardrails set by human managers but executing autonomously.

The test also highlights Anthropic’s distinctive approach to AI development. While competitors race to deploy consumer products, Anthropic continues running controlled experiments that probe the boundaries and risks of increasingly capable systems. It’s slower, more methodical, and potentially more valuable for understanding what we’re actually building toward.

What remains unclear is how much money flowed through the system, how many transactions were completed, and what percentage of negotiations failed. Anthropic hasn’t released detailed results, keeping the focus on the concept rather than the metrics. That suggests the company views this as exploratory research rather than a product proof-of-concept.

But make no mistake – this is a proof-of-concept whether Anthropic frames it that way or not. The technology works. AI agents can conduct commerce with each other. The genie is out of the bottle, and now the hard questions begin about how to shape what comes next.

Anthropic’s marketplace experiment doesn’t just demonstrate technical capability – it forces the industry to confront what autonomous AI commerce actually looks like in practice. The questions it raises about liability, regulation, and market dynamics won’t be answered by better models or faster inference. They’ll require new frameworks for thinking about machine agency in economic systems. For now, this remains a controlled test. But the line between experiment and reality gets thinner every time an AI agent closes a deal that a human never touched.
