- Amazon stock reached record highs on the news, with investors betting AWS custom silicon can capture AI workload share from Nvidia’s data center GPU monopoly
- The partnership gives Meta crucial AI compute capacity while reducing dependence on Nvidia’s constrained supply chain and premium pricing
- AWS now has proof its custom chips can handle Meta-scale AI training, potentially accelerating enterprise adoption of Trainium and Inferentia

Amazon just scored a major validation for its custom chip strategy, and Wall Street noticed. The company’s stock hit an all-time high Friday after Meta revealed it’s deploying Amazon Web Services’ homegrown Trainium and Inferentia processors to power its AI infrastructure. The deal marks a turning point in the AI chip wars, proving hyperscalers can build credible alternatives to Nvidia’s dominance while Meta gets access to scarce compute capacity.

Amazon doesn’t just want to rent you cloud servers anymore – it wants to design the chips inside them. And Meta just became the poster child for why that strategy might work.

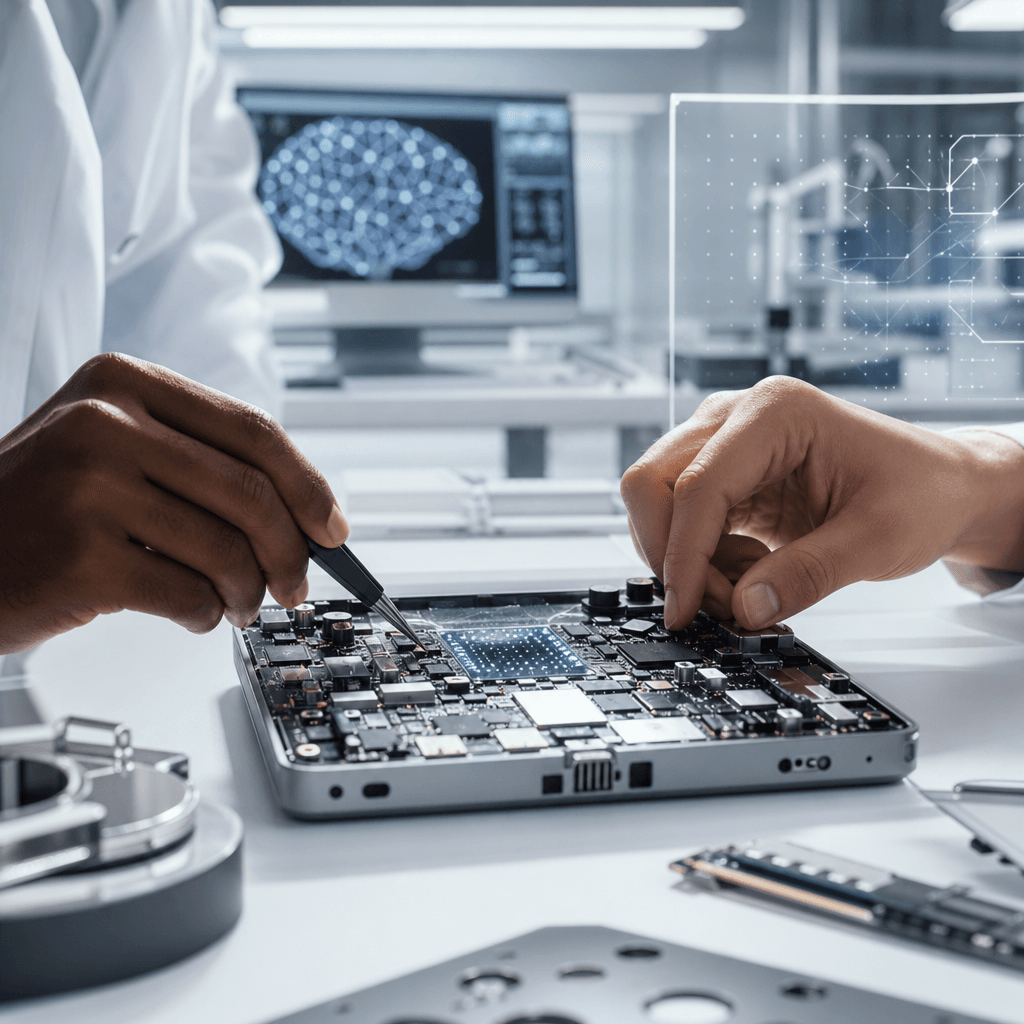

The social media giant’s decision to tap AWS’s custom Trainium training chips and Inferentia inference processors represents a watershed moment for Amazon’s semiconductor ambitions. While Google has been running AI workloads on its own TPUs for years and Microsoft recently unveiled its Maia chip, Amazon needed a marquee customer willing to bet big on non-Nvidia silicon. Meta just volunteered.

Investors responded immediately. Amazon shares surged to record territory in Friday trading as the market digested what this means – AWS isn’t just winning cloud deals, it’s potentially breaking the stranglehold Nvidia has maintained over AI infrastructure. For a company that’s poured billions into custom chip development since acquiring Annapurna Labs in 2015, this is the validation Jeff Bezos bet on.

The economics tell the story. Meta has been scrambling to secure GPU capacity as it races to build out AI infrastructure for everything from recommendation algorithms to its nascent metaverse ambitions. Nvidia’s H100 and newer H200 chips remain backordered for months, with hyperscalers and enterprises locked in a bidding war for scarce supply. AWS’s custom chips offer Meta a way around that bottleneck while likely delivering significant cost savings compared to Nvidia’s premium pricing.

But this isn’t just about saving money or securing capacity. Meta’s endorsement gives Amazon something it desperately needs – technical credibility. When one of the world’s most demanding AI operators says your custom silicon can handle production workloads at scale, enterprise customers start paying attention. AWS has been pitching Trainium and Inferentia for years with modest uptake. Now it has a reference customer that processes billions of AI inferences daily.

The chip specs matter here. Amazon’s Trainium chips are purpose-built for training large language models and other AI systems, competing directly with Nvidia’s pricey A100 and H100 GPUs. Inferentia focuses on inference – actually running trained models in production – where cost per operation becomes critical at Meta’s scale. AWS claims both deliver better price-performance than comparable Nvidia offerings, though benchmarks vary wildly depending on the workload.

What’s remarkable is the speed of this shift. Just two years ago, custom AI chips from cloud providers were viewed as science projects – interesting experiments that would never challenge Nvidia’s CUDA software ecosystem and developer mindshare. OpenAI runs on Microsoft Azure using Nvidia chips. Anthropic signed a massive AWS deal but the chip details remained vague. Meta choosing to explicitly highlight its use of Amazon’s custom silicon signals the market is fragmenting faster than anyone expected.

The competitive implications ripple outward. Google Cloud has been pushing its TPU chips for years with limited success outside Alphabet’s own products. Microsoft’s Maia chips are still rolling out. Now AWS has Meta as proof that custom silicon can work at the highest tier of AI deployment. That puts pressure on every cloud provider to articulate their chip strategy and gives enterprises permission to consider alternatives to Nvidia’s ecosystem.

For Meta, the calculus is straightforward. The company has already invested heavily in custom chips for its data centers, including its own AI training and inference accelerators. Partnering with AWS on Trainium and Inferentia lets Meta access additional capacity without building more data centers while maintaining flexibility across multiple silicon providers. It’s the kind of supply chain diversification that became critical after the chip shortages of recent years.

Amazon hasn’t disclosed what Meta is paying for Trainium or Inferentia capacity, but the economics need to work for both sides. Meta gets compute capacity and likely meaningful savings versus Nvidia GPUs. AWS gets utilization of custom chips it’s already manufacturing, margin improvement over reselling Nvidia hardware, and the strategic win of locking in one of tech’s biggest infrastructure spenders.

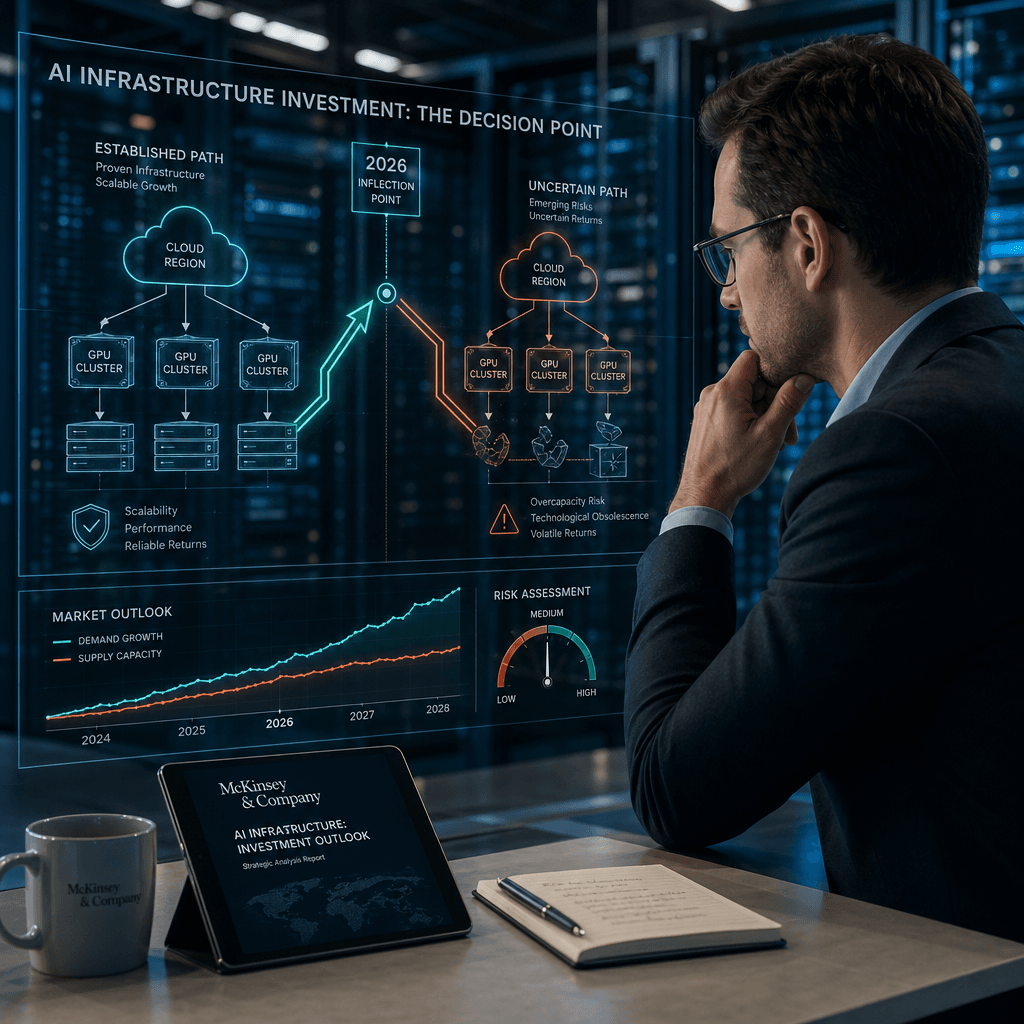

The deal also highlights how the AI infrastructure market is splintering into distinct layers. Nvidia still dominates the bleeding-edge training of frontier models – the GPT-5s and Gemini Ultras of the world. But for inference workloads, fine-tuning, and smaller model training, custom chips are increasingly viable. Meta likely isn’t replacing all its Nvidia GPUs overnight. It’s adding AWS custom silicon to a diversified portfolio.

What happens next will determine whether this is a one-off partnership or the beginning of a broader industry shift. If Meta reports strong performance and cost savings from AWS chips, expect other large-scale AI operators to follow. If issues emerge with software compatibility, developer tools, or performance, Nvidia’s moat remains intact. AWS needs to prove its chips aren’t just good enough but compelling enough to overcome the switching costs and ecosystem lock-in Nvidia has spent years building.

Amazon’s custom chip strategy just graduated from ambitious experiment to market-proven alternative. Meta’s endorsement doesn’t dethrone Nvidia overnight, but it cracks open a door that every cloud provider and chip startup will now try to rush through. For enterprises watching their AI infrastructure costs spiral, the message is clear – you might finally have negotiating leverage against Nvidia’s premium pricing. And for AWS, this is the kind of strategic win that justifies years of R&D spending while opening up a potentially massive new revenue stream as AI workloads explode. The AI chip wars just got a lot more interesting.
