- Anthropic continues high-level talks with Trump administration despite recent Pentagon supply-chain designation, according to TechCrunch
- The diplomatic outreach follows CEO Dario Amodei’s White House meetings about the controversial Mythos AI project
- Pentagon’s supply-chain risk label could block Anthropic from lucrative defense contracts, threatening its enterprise strategy
- The thaw suggests both sides see value in maintaining dialogue as AI becomes central to national security infrastructure

Despite being flagged as a supply-chain risk by the Pentagon just weeks ago, Anthropic is actively engaging with senior Trump administration officials in what appears to be a diplomatic thaw. The AI startup behind Claude is navigating a precarious political landscape as it tries to repair relationships with defense officials while maintaining access to government contracts potentially worth billions. The move signals how critical federal partnerships have become for AI companies racing to dominate the enterprise market.

Anthropic finds itself in an awkward dance with Washington. The AI safety-focused startup that positioned itself as the responsible alternative to OpenAI now faces questions about its own security posture after the Pentagon quietly added it to a supply-chain risk list. But instead of retreating, CEO Dario Amodei and his team are doubling down on engagement.

The company’s ongoing conversations with senior Trump administration officials, including Chief of Staff Susie Wiles, reveal a careful repair strategy. It’s a high-stakes game—federal contracts and partnerships have become table stakes for AI companies trying to prove their technology works at scale. Microsoft learned this lesson early, embedding itself deeply into government operations. Now every serious AI player wants a piece of that action.

The Pentagon designation came as a shock to an industry that assumed Anthropic’s cautious approach to AI safety would insulate it from political scrutiny. But national security officials apparently see things differently. The supply-chain risk label doesn’t just carry reputational damage—it can effectively bar a company from defense work, cutting off access to some of the most lucrative and prestigious contracts in tech.

What triggered the designation remains murky. Industry insiders point to Anthropic’s complex funding structure, which includes substantial backing from Amazon and Google, both of which have their own complicated relationships with federal agencies. Others speculate it’s connected to the Mythos project, an ambitious AI initiative that reportedly caught President Trump’s attention, though earlier coverage reported that he initially denied knowing about it.

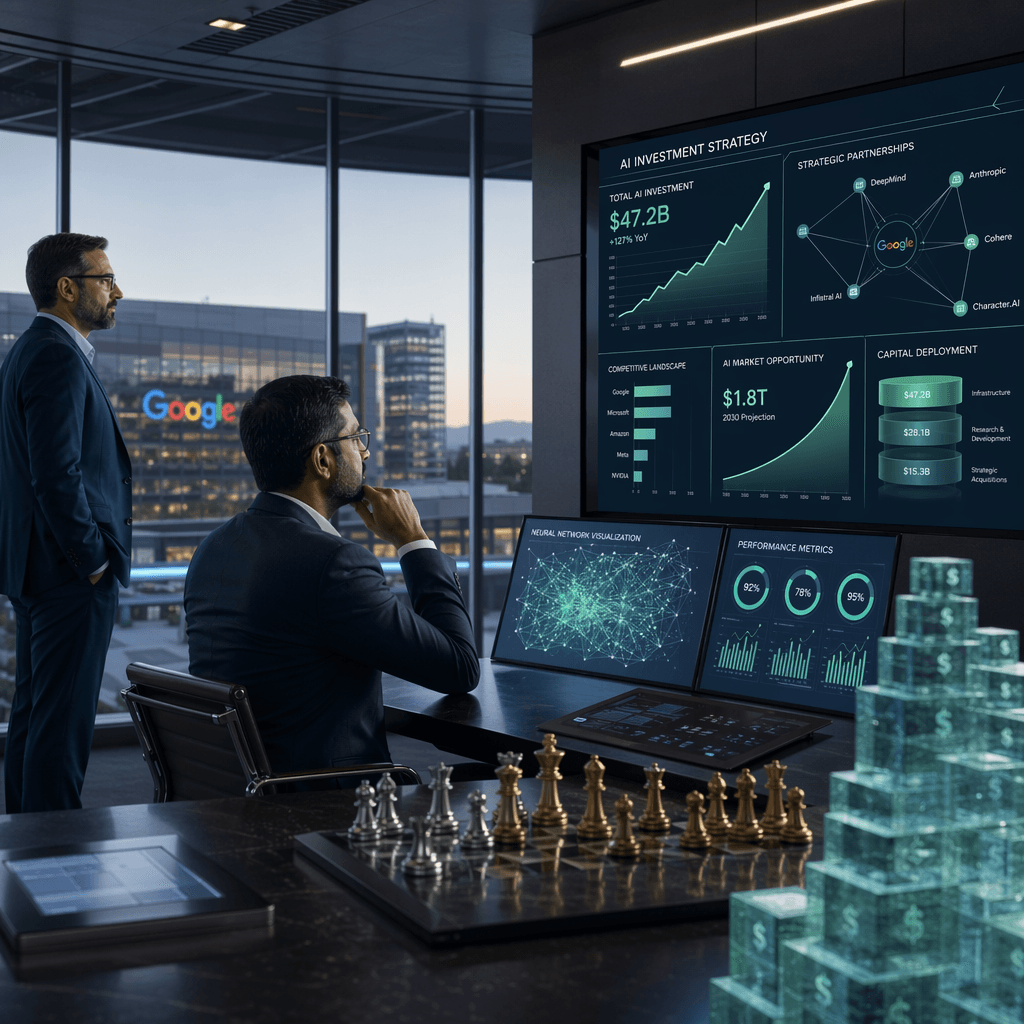

Amodei’s White House visits weren’t just courtesy calls. According to sources familiar with the discussions, Anthropic has been making the case that its constitutional AI approach—which bakes safety principles directly into model training—actually makes it more secure than competitors. It’s a clever pitch that reframes the company’s core differentiator as a national security asset rather than just an ethical stance.

The timing couldn’t be more critical for Anthropic. The company is reportedly raising fresh capital at a valuation north of $40 billion, and government credibility matters to institutional investors. A prolonged freeze-out from federal contracts would raise uncomfortable questions about whether Anthropic can compete with OpenAI, which has cultivated its own Pentagon relationships, and Microsoft, which already powers much of the government’s cloud infrastructure.

Trump administration officials appear willing to listen, which itself signals something significant. The White House could have easily maintained distance from a company carrying Pentagon baggage. Instead, the continued dialogue suggests both sides see a path forward—and recognize that freezing out one of America’s leading AI companies might hand advantages to international competitors.

The enterprise AI market is watching closely. If Anthropic successfully navigates this mess, it demonstrates that even serious regulatory or security concerns can be managed with the right mix of technical credibility and political savvy. If the relationship stays frozen, it sends a chilling message to other AI startups about the risks of running afoul of the national security apparatus.

What happens next likely depends on what Anthropic offers in return for rehabilitation. Will the company agree to additional security audits? Restructure its governance? Accept limits on certain partnerships or customers? The answers will shape not just Anthropic’s future but potentially set precedents for how Washington handles the entire AI industry.

For now, the thaw remains tentative. But the fact that conversations continue—despite the supply-chain designation remaining in place—suggests neither side wants a permanent break. In an industry moving as fast as AI, even a few months of frozen relationships can mean falling behind. Anthropic is betting it can warm things up before that happens.

Anthropic’s balancing act with the Trump administration reveals just how intertwined AI development and national security have become. The company’s ability to maintain dialogue despite Pentagon concerns shows that Washington understands it can’t afford to alienate America’s leading AI builders—even when security questions arise. But this thaw comes with strings attached. Whatever commitments Anthropic makes to get back in the government’s good graces will likely set the template for how other AI companies navigate similar scrutiny. For an industry that’s moved fast and broken things for years, learning to move carefully through Washington’s bureaucracy might be the hardest challenge yet.
