- DeepSeek released a preview version of its V4 large language model, per CNBC
- The release intensifies competition in China’s AI sector as local startups race to match OpenAI and Google’s capabilities
- DeepSeek’s open-source approach positions it as a challenger to proprietary Western models amid ongoing tech tensions
- Industry watchers will monitor V4’s performance benchmarks and whether it can match GPT-4 class capabilities

Chinese AI startup DeepSeek just released a preview of its V4 large language model, marking the company’s latest salvo in the increasingly heated global AI arms race. The release comes as China’s homegrown AI players rush to close the gap with U.S. giants like OpenAI and Anthropic, with DeepSeek positioning itself as a key open-source alternative. The move signals that Chinese AI firms are doubling down on LLM development despite ongoing U.S. chip export restrictions that have complicated access to cutting-edge hardware.

DeepSeek just threw down the gauntlet in the global AI race. The Chinese startup released a preview version of its V4 large language model on Friday, according to CNBC, delivering on months of anticipation from developers and researchers tracking China’s push to achieve AI parity with Silicon Valley.

The timing couldn’t be more pointed. While OpenAI and Google dominate headlines with their proprietary models, DeepSeek’s been carving out a distinct niche as China’s most prominent open-source LLM developer. The V4 preview suggests the company’s betting that transparency and community-driven development can outflank the closed ecosystems of Western competitors.

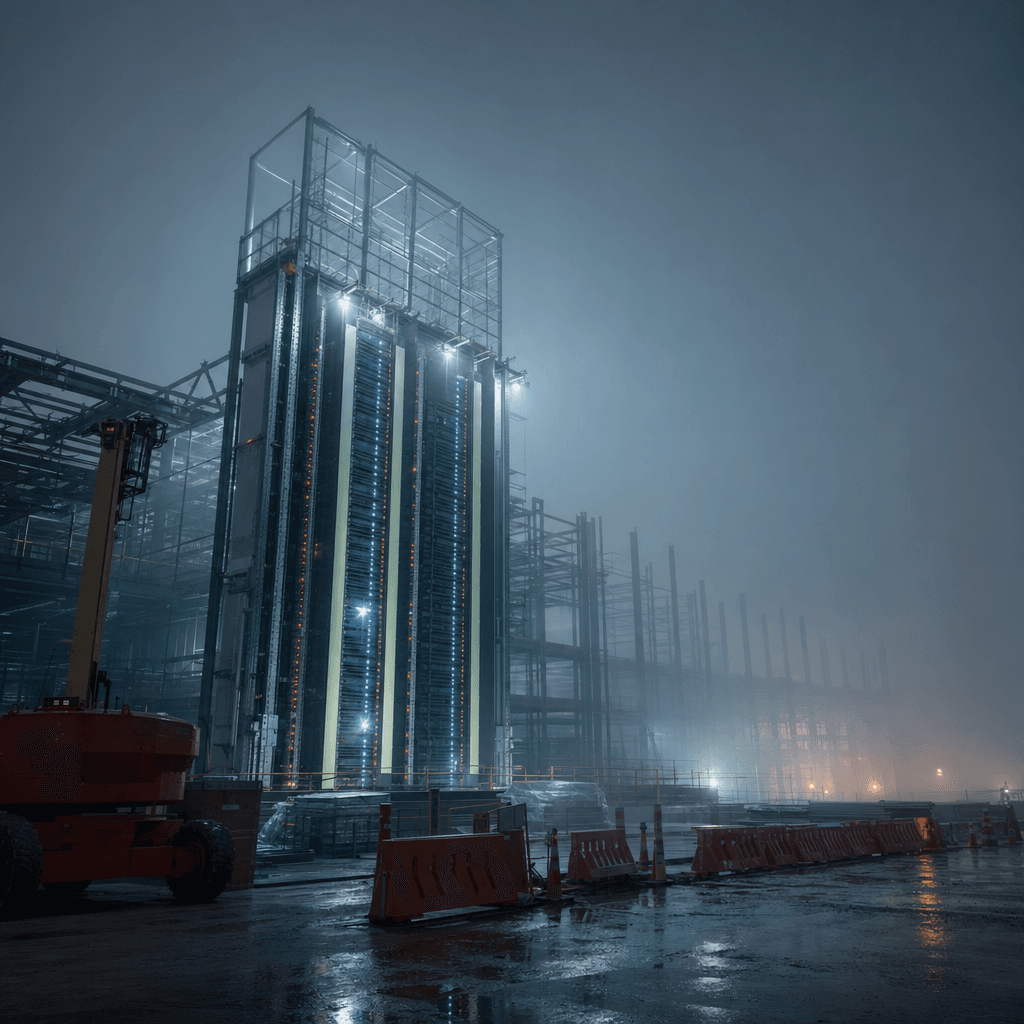

What makes this release particularly significant is the context. Chinese AI firms have been operating under intense pressure from U.S. export controls that limit access to Nvidia’s most advanced chips – the GPUs that typically power frontier model training. DeepSeek’s ability to ship a competitive V4 preview indicates Chinese companies are finding workarounds, whether through stockpiled hardware, alternative chip architectures, or more efficient training methods that squeeze more performance from less cutting-edge silicon.

The preview designation is telling. It means developers and enterprises can start testing V4’s capabilities now, but the full production release is still to come. This phased rollout mirrors strategies used by Meta with its Llama models and Mistral AI in Europe – build community momentum early, gather real-world feedback, and create ecosystem lock-in before the official launch.

DeepSeek’s earlier V3 model earned respect in AI circles for punching above its weight on reasoning tasks and code generation, often matching models trained with far larger budgets. If V4 delivers meaningful improvements in areas like multi-step reasoning, context window length, or multilingual performance, it could force Western labs to take China’s open-source efforts more seriously.

The competitive dynamics are shifting fast. While OpenAI keeps GPT-5 development under wraps and Anthropic iterates on Claude, China’s ecosystem is flooding the zone with alternatives. Alibaba’s Qwen models, Baidu’s ERNIE, and now DeepSeek’s V4 create a multi-pronged challenge that’s harder for any single Western player to counter.

For developers, the calculation is straightforward: if DeepSeek V4 delivers performance comparable to GPT-4 class models with open weights that can be self-hosted, free of a provider’s API rate limits, it becomes an attractive option for companies nervous about vendor lock-in or about data privacy with U.S. cloud providers.

The geopolitical subtext is impossible to ignore. Beijing has made AI self-sufficiency a national priority, pouring resources into domestic chip manufacturing and model development. DeepSeek’s progress demonstrates that export controls, while slowing China’s AI advancement, haven’t stopped it. If anything, restrictions may be accelerating innovation in efficiency and alternative approaches.

What remains unclear is V4’s actual performance. Preview releases often showcase cherry-picked capabilities while obscuring weaknesses. The AI community will scrutinize results on standardized benchmarks such as MMLU for broad knowledge and HumanEval for coding, along with reasoning challenges. If DeepSeek’s claims hold up under independent evaluation, it’ll mark a genuine inflection point in the AI race.

The open-source angle also matters for smaller players and startups globally. Companies in regions with limited access to U.S. cloud services or budget constraints for API costs could gravitate toward DeepSeek if V4 proves reliable. That network effect could shift market share away from the San Francisco-centric AI establishment faster than anyone expects.

DeepSeek’s V4 preview isn’t just another model release – it’s a signal that China’s AI ecosystem is maturing faster than Western observers expected. Whether V4 lives up to the hype will depend on independent benchmarks and real-world performance over the coming weeks. But the broader trend is hard to dismiss: the AI race is becoming genuinely multipolar, and assumptions about inevitable U.S. dominance are looking shakier by the quarter. For developers, enterprises, and policymakers alike, DeepSeek’s latest move demands attention. The question isn’t whether China can build competitive LLMs anymore – it’s how quickly its labs will close the remaining gaps.
