- ■

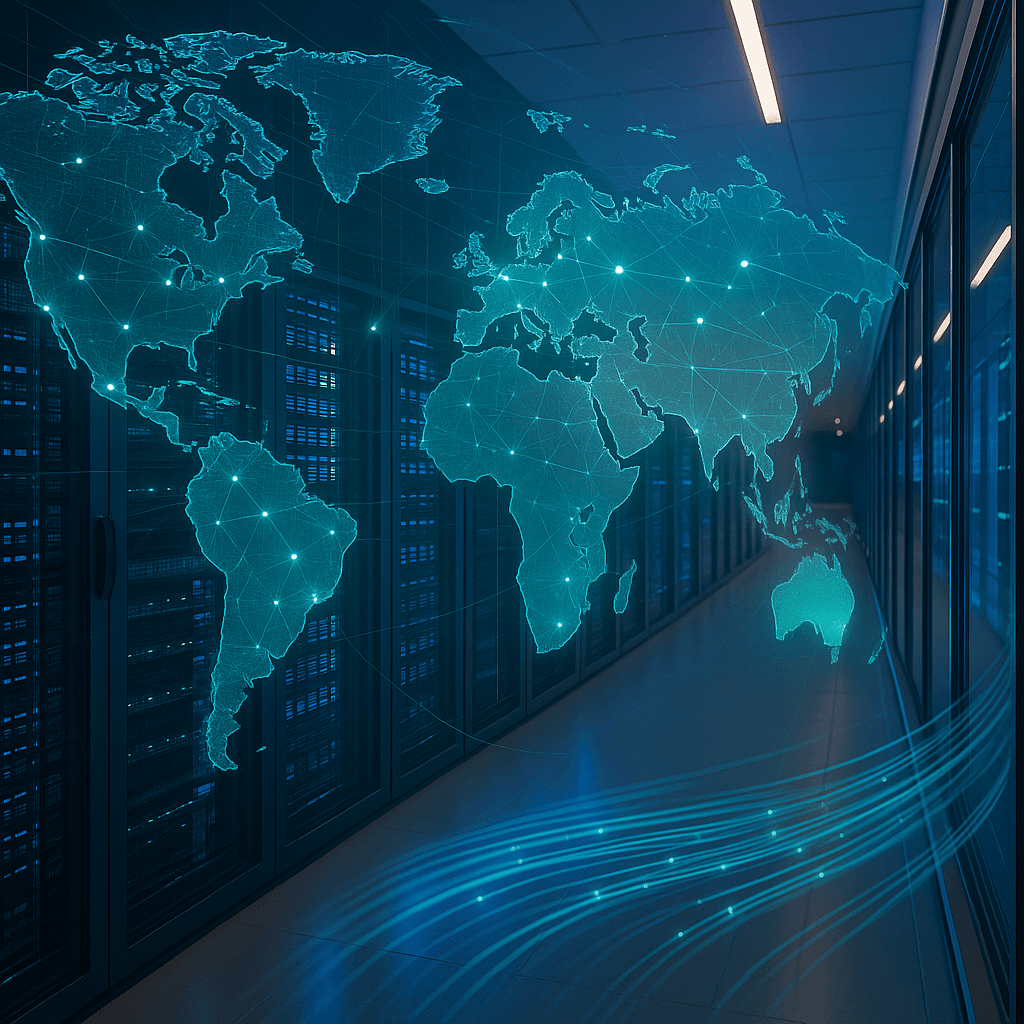

Insurance firms face mounting pressure as private capital floods AI data centers, challenging traditional risk assessment frameworks according to CNBC reporting

- ■

GPU-heavy facilities present unique underwriting challenges due to rapid depreciation cycles and unproven long-term reliability of AI-specific hardware

- ■

The infrastructure boom exposes gaps in how insurers value assets that can become technologically obsolete within 18-24 months

- ■

Private equity and venture capital rushing into data center deals are forcing insurers to develop entirely new actuarial models for AI-era infrastructure

The AI infrastructure gold rush is forcing insurers to scramble. As billions pour into data centers packed with cutting-edge GPUs, traditional risk models are breaking down under the weight of unprecedented capital deployment and rapid technological obsolescence. The collision between old-school insurance and bleeding-edge AI infrastructure is creating a new battleground where underwriters must price assets that didn’t exist two years ago and might be outdated in two more.

The AI data center boom is putting insurance companies through a stress test they never anticipated. As private capital races to fund the infrastructure powering everything from OpenAI’s ChatGPT to enterprise AI deployments, insurers are grappling with a fundamental question: how do you price risk for assets that barely existed a year ago?

Traditional data centers were relatively straightforward to insure: they had predictable power consumption, well-understood cooling systems, and hardware that depreciated on known schedules. AI-optimized facilities are different beasts entirely. A single rack of Nvidia H100 GPUs can draw more power than a small office building, generate extraordinary heat, and carry a price tag in the millions of dollars, all while facing potential technological obsolescence before the insurance policy expires.

The capital influx is staggering. Private equity firms, sovereign wealth funds, and specialized infrastructure investors are pouring billions into building and retrofitting facilities to handle AI workloads. These deals often involve complex financing structures where GPUs themselves serve as collateral, creating a novel asset class that insurers must evaluate. It’s not just about insuring the building anymore – it’s about underwriting the computational capacity inside it.

What’s keeping underwriters up at night is the depreciation curve. A traditional server might lose value predictably over five to seven years. But Nvidia’s GPU roadmap suggests new chip architectures every 12-18 months, each delivering step-function improvements in performance per watt. That means today’s cutting-edge H100 cluster could be economically obsolete well before it’s physically worn out. How do you write a policy when the insured asset might lose half its market value not from damage, but because a better chip was announced?
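To make that depreciation gap concrete, here is a minimal Python sketch comparing a conventional straight-line book value with a market value that halves on a fixed schedule. Every number in it is a hypothetical assumption chosen for illustration: the $1 million cluster cost, the 60-month accounting life (the low end of the five-to-seven-year range), and an 18-month "half-life" for market value that loosely echoes the 12-18 month chip cadence. None of these figures come from CNBC, Nvidia, or any insurer's actual model.

```python
# Hypothetical illustration only: all parameters below are assumptions,
# not figures reported by CNBC, Nvidia, or any insurer.


def straight_line_value(cost: float, months_elapsed: int, useful_life_months: int) -> float:
    """Book value under straight-line depreciation to zero salvage value."""
    remaining = max(useful_life_months - months_elapsed, 0)
    return cost * remaining / useful_life_months


def half_life_value(cost: float, months_elapsed: int, half_life_months: float) -> float:
    """Assumed market value that halves every `half_life_months` (smooth exponential decay)."""
    return cost * 0.5 ** (months_elapsed / half_life_months)


if __name__ == "__main__":
    cost = 1_000_000.0  # hypothetical cluster cost in dollars
    for month in (0, 12, 18, 24, 36):
        book = straight_line_value(cost, month, useful_life_months=60)
        market = half_life_value(cost, month, half_life_months=18)
        # The gap is value the books still carry but a buyer might not pay for.
        print(f"month {month:>2}: book ${book:,.0f}  market ${market:,.0f}  gap ${book - market:,.0f}")
```

Under these assumptions, at month 18 the books still carry $700,000 while the modeled market value has fallen to $500,000. A real actuarial model would need far richer inputs, but even this toy version shows why a schedule built for five-to-seven-year hardware can overstate what an AI cluster is worth.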
