A former Facebook insider is bringing Meta-grade content moderation to the AI era. Moonbounce just closed a $12 million funding round to scale its AI control engine, which turns written content policies into predictable, automated AI guardrails. As companies rush to deploy AI assistants and chatbots, the startup is betting that enterprise trust and safety infrastructure will become as critical as the models themselves.

Moonbounce wants to carry the content moderation playbook of the social media giants into the generative AI era. The startup announced its Series A as companies deploying AI assistants, chatbots, and automated systems grapple with a messy reality: their AI models don't follow content policies the way trained human moderators do.

The timing couldn’t be sharper. While Meta, Google, and other tech giants spent the better part of two decades building sophisticated trust and safety systems for user-generated content, the current AI boom caught most enterprises flat-footed. Startups and established companies alike are shipping AI products with rudimentary guardrails, hoping nothing goes catastrophically wrong.

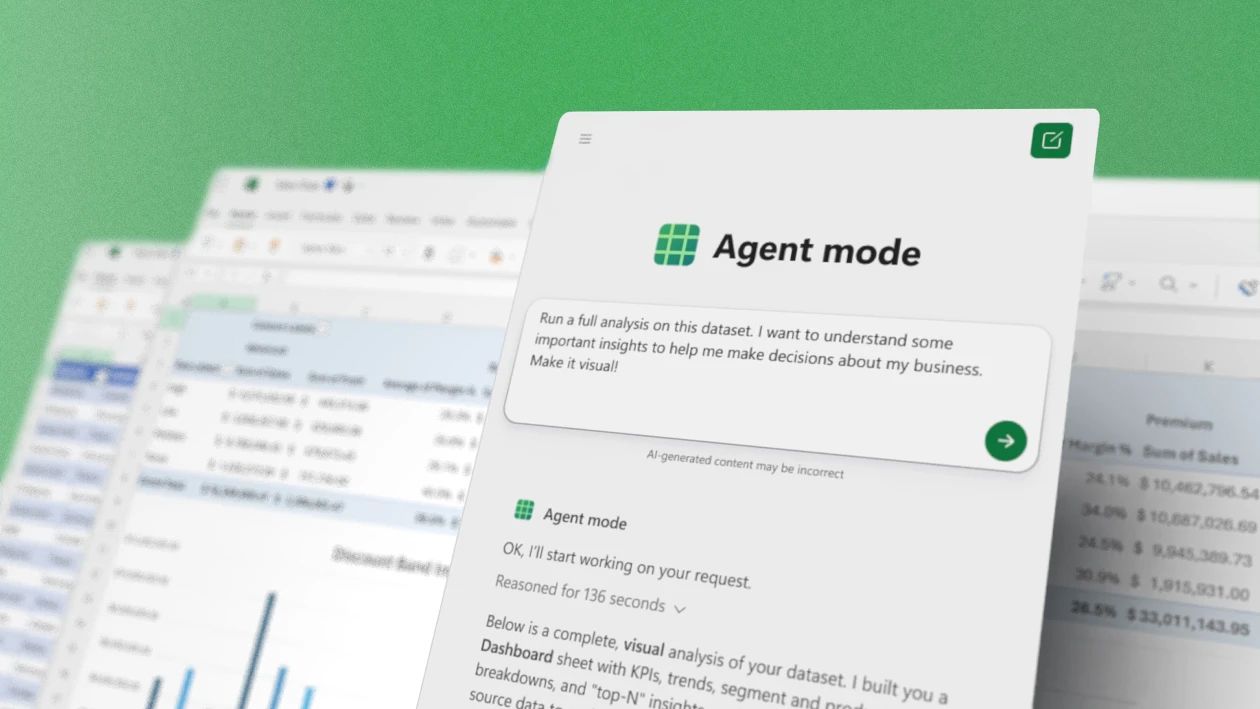

Moonbounce’s founder, who previously worked on Meta’s content moderation infrastructure, saw this gap firsthand. The company’s core insight is deceptively simple: translate the complex policy frameworks that govern social media into machine-readable rules that AI systems can enforce consistently. It’s the difference between writing a 50-page content policy document and actually getting an AI to follow it in real time across millions of interactions.

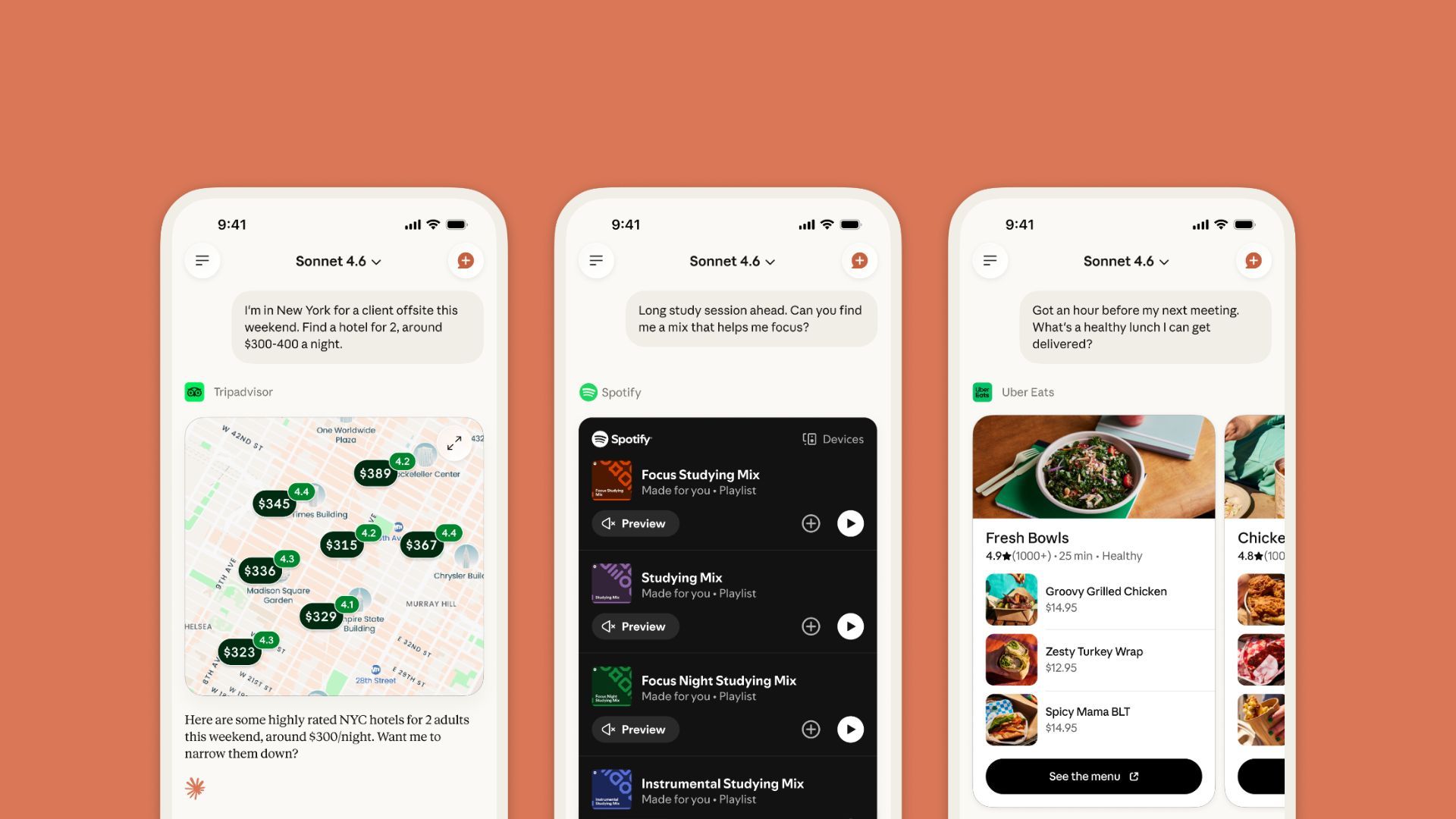

The platform works as a control layer that sits between a company’s AI models and its end users. When a business defines what content is acceptable, whether that means filtering financial advice, blocking certain political topics, or preventing the AI from roleplaying as real people, Moonbounce converts those policies into technical guardrails. The system then monitors AI outputs in real time, catching violations before they reach users.
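To make the "control layer" idea concrete, here is a minimal sketch of the general pattern: a written policy is compiled into machine-readable rules, and every model output passes through a check before it is shown to the user. Moonbounce has not published its API, so everything below is hypothetical: the `Rule` format, `compile_policy`, `guard`, the policy keys, and the fallback message are illustrative names only. Keyword matching is a deliberately simple stand-in; a production guardrail would more likely rely on trained classifiers or an LLM judge.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One machine-readable rule derived from a written policy clause (hypothetical format)."""
    name: str
    pattern: re.Pattern
    action: str  # "block" replaces the output; "flag" lets it through but records the hit


@dataclass
class Decision:
    allowed: bool
    text: str              # what the end user actually sees
    violations: list       # names of every rule that matched


def compile_policy(policy: dict) -> list:
    """Turn a simple {rule_name: {"terms": [...], "action": ...}} policy into regex rules."""
    rules = []
    for name, spec in policy.items():
        if not spec["terms"]:
            continue  # an empty alternation would match every output, so skip it
        pattern = re.compile("|".join(re.escape(t) for t in spec["terms"]), re.IGNORECASE)
        rules.append(Rule(name, pattern, spec["action"]))
    return rules


def guard(output: str, rules: list, fallback: str = "Sorry, I can't help with that.") -> Decision:
    """Check a model output against every rule before it reaches the user."""
    hits = [r for r in rules if r.pattern.search(output)]
    blocked = any(r.action == "block" for r in hits)
    return Decision(
        allowed=not blocked,
        text=fallback if blocked else output,
        violations=[r.name for r in hits],
    )


# Hypothetical policy: block investment advice, flag possible impersonation for review.
POLICY = {
    "financial_advice": {"terms": ["you should buy", "guaranteed return"], "action": "block"},
    "impersonation": {"terms": ["speaking as the ceo"], "action": "flag"},
}

if __name__ == "__main__":
    rules = compile_policy(POLICY)
    print(guard("This fund has a guaranteed return of 20%.", rules))
    print(guard("Speaking as the CEO, I love this product.", rules))
```

Because the rules are compiled once and applied identically to every response, the same output always gets the same decision, which is the kind of predictability the article says raw model behavior lacks.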

This matters because current AI models are notoriously inconsistent. Ask the same sensitive question three different ways and you might get three different responses: one compliant, one borderline, one completely off the rails. For enterprises deploying customer-facing AI, that inconsistency translates into legal liability, brand risk, and regulatory headaches.
