- Waymo launched its World Model using Google DeepMind’s Genie 3 to generate hyper-realistic simulations for autonomous vehicle testing
- The system can simulate extreme edge cases like tornadoes, flooded streets, wildfires, and wildlife encounters that robotaxis rarely face in real-world operations
- Three control mechanisms (driving action, scene layout, and language control) allow developers to customize road conditions, weather, lighting, and ‘what if’ counterfactuals
- Waymo can convert real dashcam footage into simulated environments and run scenarios at 4x speed without losing image quality

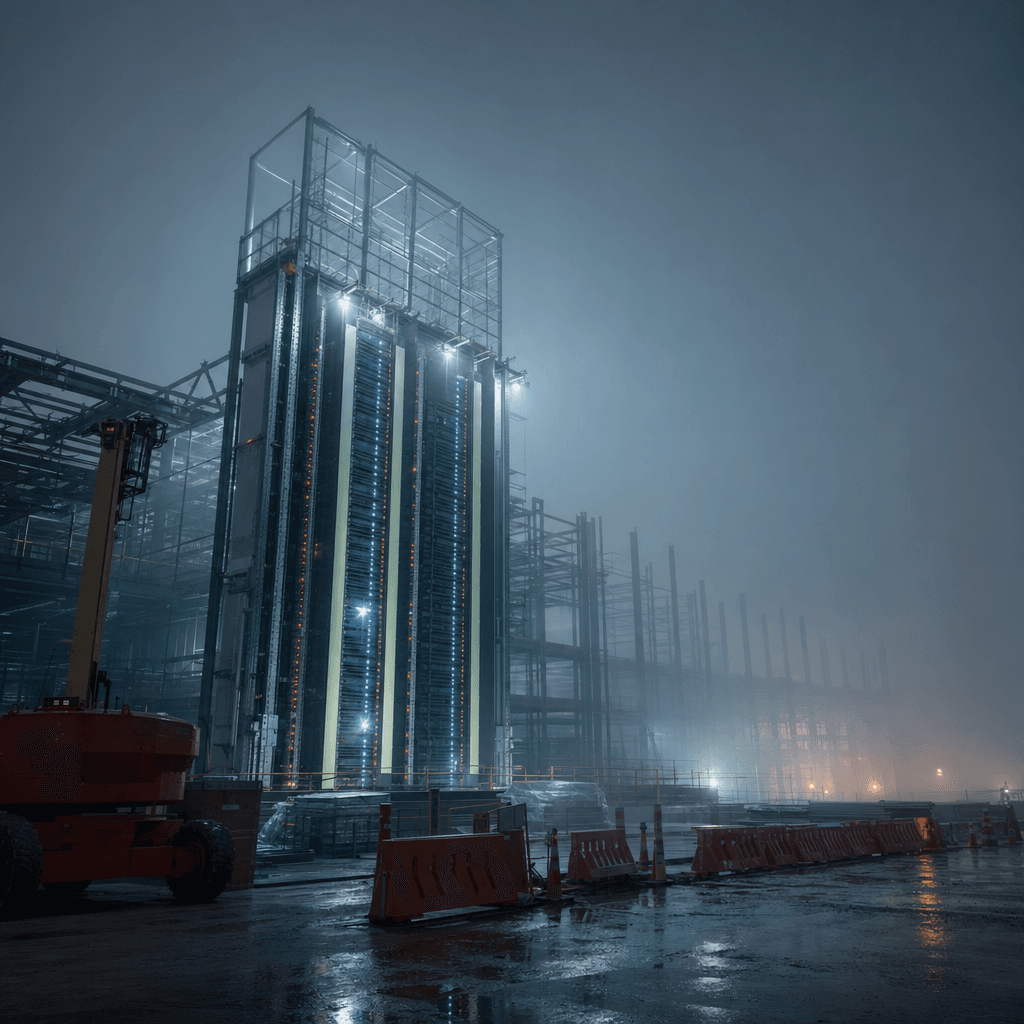

Waymo just unveiled a wild new testing ground for its self-driving cars – one where robotaxis can face down tornadoes, rogue elephants, and neighborhoods on fire. Built on Google DeepMind’s Genie 3 AI world model, the Waymo World Model generates photorealistic virtual environments to test autonomous vehicles in scenarios they’d rarely (or never) encounter on actual roads. According to Waymo’s blog post, the system can create “virtually any scene – from regular, day-to-day driving to rare, long-tail scenarios” across multiple sensor types, marking a significant leap in how AV companies prepare their fleets for the unexpected.

Waymo is taking its autonomous vehicle testing into some seriously extreme territory. The Alphabet-owned self-driving car company just rolled out its World Model, a simulation platform built on Genie 3 that can conjure up virtually any driving scenario imaginable, including the kind of edge cases that would make most AV engineers lose sleep.

Picture this: a Waymo robotaxi cruising down a lonely highway when suddenly a massive tornado materializes in the distance. Or imagine the same vehicle navigating a snow-covered Golden Gate Bridge, dodging furniture floating through a flooded suburban street, or encountering an elephant blocking the road. These aren’t hypothetical nightmares – they’re actual scenarios Waymo can now simulate using Google DeepMind’s latest AI breakthrough.

The Waymo World Model represents a major evolution in how autonomous vehicle companies stress-test their technology. Simulation has always been critical to AV development, allowing companies to rack up billions of virtual miles without risking actual passengers, but most existing platforms struggle to generate truly photorealistic environments that accurately mirror real-world sensor data. Genie 3, Google DeepMind’s AI world model that can generate interactive 3D spaces from text or image prompts, changes that equation dramatically.
