Nvidia just opened the reasoning floodgates for autonomous vehicles. At CES 2026, the chip giant unveiled Alpamayo, a new family of open-source reasoning models that let self-driving cars think through complex, never-before-seen scenarios in real time. The move signals a major shift in how the industry approaches AV safety—moving from pattern recognition to actual reasoning.

Nvidia is making a serious play in the autonomous vehicle infrastructure war. Alpamayo isn’t just another AI model announcement; it’s a signal that the self-driving car industry is ready to move beyond systems that rely purely on pattern matching over millions of hours of driving footage.

At its core, Alpamayo is a 10-billion-parameter reasoning model that works like a chain-of-thought system for cars. Instead of simply recognizing a traffic light or pedestrian and responding reflexively, the model can think through rare edge cases, break down decision-making into steps, and explain its reasoning. This matters because the hardest part of autonomous driving isn’t handling normal traffic. It’s handling the weird stuff—a traffic light that’s gone out at a busy intersection, construction that’s thrown off lane markings, or a pedestrian behaving unpredictably.

“The ChatGPT moment for physical AI is here,” Nvidia CEO Jensen Huang said in a statement, “when machines begin to understand, reason, and act in the real world.” During his keynote on Monday, Huang explained the architecture more directly: “Not only does Alpamayo take sensor input and activate steering wheel, brakes and acceleration, it also reasons about what action [it] is about to take. It tells you what action [it] is going to take, the reasons by which it came about that action. And then, of course, the trajectory.”

What makes this genuinely different is that the model can solve problems it’s never encountered before. It doesn’t need 10 million examples of traffic light failures to know how to handle one. Instead, it reasons through the scenario using the same kind of step-by-step logic humans use. Ali Kani, Nvidia’s vice president of automotive, explained it during a press briefing: “It does this by breaking down problems into steps, reasoning through every possibility, and then selecting the safest path.”
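To make Kani's description concrete, here is a toy Python sketch of the "break it into steps, weigh every option, pick the safest" loop, applied to the dead-traffic-light example. It is purely illustrative and is not Alpamayo's code or API: the `Candidate` class, the `choose_safest` function, and the risk numbers are all hypothetical, and a real model would produce its options and scores from sensor data rather than a hand-written list.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    action: str      # hypothetical maneuver label
    risk: float      # made-up risk score in [0, 1]; lower is safer
    rationale: str   # short human-readable justification


def choose_safest(candidates):
    """Return the lowest-risk candidate and a step-by-step reasoning trace."""
    if not candidates:
        raise ValueError("need at least one candidate")
    # Step 1: lay out every option with the reason behind its score.
    trace = [
        f"consider {c.action}: risk={c.risk:.2f} ({c.rationale})"
        for c in candidates
    ]
    # Step 2: select the option with the lowest risk.
    best = min(candidates, key=lambda c: c.risk)
    trace.append(f"select {best.action}")
    return best, trace


# Scenario: a traffic light has gone out at a busy intersection.
candidates = [
    Candidate("proceed at speed", 0.90, "cross traffic may not yield"),
    Candidate("treat as all-way stop", 0.15, "stop, then go when clear"),
    Candidate("stop and wait indefinitely", 0.40, "blocks traffic behind"),
]
best, trace = choose_safest(candidates)
for line in trace:
    print(line)
```

The point of the sketch is the shape of the output: a chosen action plus the trace that explains it, which mirrors Huang's description of a model that reports both what it will do and why.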

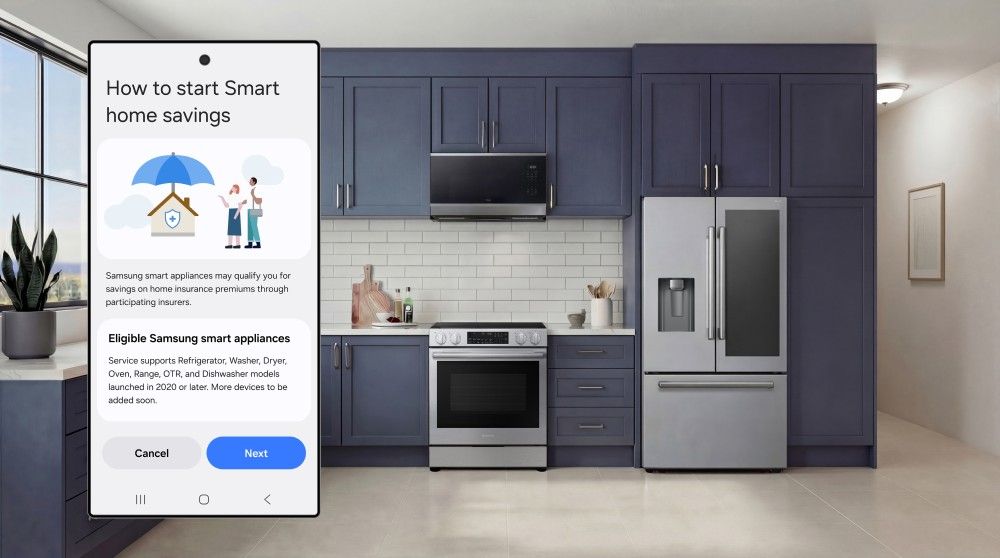

The real power play here is the open-source strategy. Nvidia is releasing Alpamayo 1’s underlying code on Hugging Face, letting developers fine-tune it into smaller, faster versions tailored for specific vehicles. This isn’t Nvidia gatekeeping its silicon advantage—it’s Nvidia becoming the infrastructure layer that everyone building autonomous vehicles depends on. Developers can use it to train simpler driving systems, build auto-labeling tools that tag video data automatically, or create evaluators that assess whether a car made the right call.
