- ■

Google is testing AI headline replacement in Google Discover that creates misleading clickbait

- ■

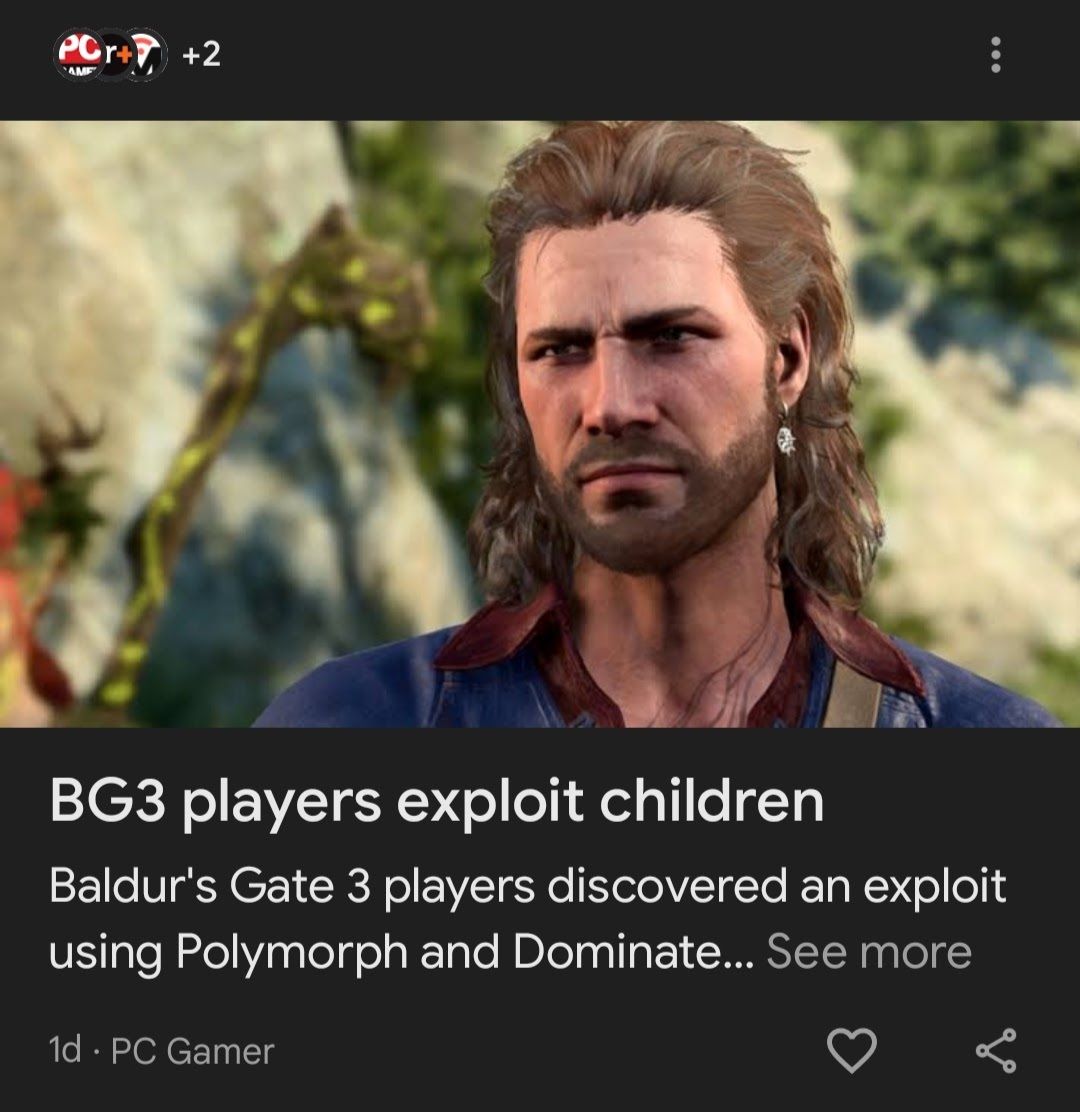

Examples include “BG3 players exploit children” and “Steam Machine price revealed” – both inaccurate summaries

- ■

Publishers lose control over how their content is marketed to readers

- ■

Google confirms it’s a “small UI experiment” that could be scrapped based on feedback

Google is quietly experimenting with AI-generated headlines in Google Discover, replacing journalists’ carefully crafted titles with misleading clickbait. The feature, spotted by The Verge’s Sean Hollister, transforms legitimate stories into confusing phrases like “BG3 players exploit children” and “Steam Machine price revealed” – neither of which accurately reflects the actual content. The experiment raises serious questions about Google’s role as a news gatekeeper and its impact on publishers’ editorial control.

Google just crossed a line that has publishers seeing red. The search giant is experimenting with AI-generated headlines in Google Discover that transform carefully crafted news stories into misleading clickbait – and journalists are not having it. The feature, discovered by The Verge’s Sean Hollister during his regular Google Discover browsing, replaces original headlines with AI-generated alternatives that often completely misrepresent the underlying stories. “BG3 players exploit children” appeared as a headline for a gaming story, while “Steam Machine price revealed” promoted an article that explicitly stated Valve won’t reveal pricing until next year.

The AI seems obsessed with condensing complex stories into four words or fewer, creating headlines that range from misleading to completely nonsensical. “Schedule 1 farming backup” and “AI tag debate heats” were among the cryptic phrases that left readers scratching their heads.

But the problem runs deeper than bad headlines. Google is effectively hijacking publishers’ marketing efforts, taking away their ability to control how stories are presented to readers. As Hollister points out, it’s like writing a book only to have the bookstore replace the cover without permission. The AI makeover transforms responsible journalism into apparent clickbait, potentially damaging publishers’ reputations. When readers see “Microsoft developers using AI” next to The Verge’s byline, they might assume the publication wrote that vapid headline – not knowing Google’s AI stripped away the crucial word “How” that made the original title meaningful.

Google does include a disclosure that content is “Generated with AI, which can make mistakes,” but users only see this warning if they tap “See more.” The minimal labeling leaves most readers unaware that headlines have been artificially generated.

Google spokesperson Mallory Deleon confirmed to The Verge that the company is running “a small UI experiment for a subset of Discover users” to test “a new design that changes the placement of existing headlines to make topic details easier to digest.” The corporate speak doesn’t mask what’s really happening: Google is prioritizing its own algorithmic preferences over editorial judgment.

This experiment arrives as Google faces mounting criticism from publishers about its impact on web traffic. The company’s AI Overviews and other features have been accused of reducing clicks to news sites, contributing to what Google itself admitted in court is “the rapid decline” of the open web. Publishers are already struggling with the phenomenon where Google answers questions directly without sending users to source websites. Now they’re watching Google actively rewrite their headlines in ways that could confuse or mislead their audiences.

The timing couldn’t be worse for news organizations. The Verge recently launched a subscription service partly because traditional web traffic models are breaking down under Google’s algorithmic changes. Other publishers are exploring similar strategies, recognizing they can’t rely on Google’s whims for their survival.

While some AI-generated headlines in the experiment are relatively harmless – “Origami model wins prize” and “Hyundai gain share” – the failures are spectacular enough to raise alarm. When AI transforms “Valve’s Steam Machine looks like a console, but don’t expect it to be priced like one” into “Steam Machine price revealed,” it’s not just bad editing – it’s misinformation.

Early reactions from journalists have been swift and negative. Tom Warren, whose story about developer AI usage was butchered by the algorithm, offered a succinct response: “lol wtf Google.”

The experiment highlights a fundamental tension in the modern media ecosystem. Google wants to optimize content for engagement and brevity, while publishers want to maintain editorial integrity and accurately represent their reporting. When those goals clash, readers suffer the consequences.

For now, the feature remains experimental, and Google could pull it based on feedback. But the fact that it exists at all signals Google’s willingness to override editorial decisions in pursuit of algorithmic optimization. Publishers who’ve spent years perfecting headline writing to balance accuracy, engagement, and SEO considerations now face the prospect of having their work rewritten by an AI that clearly doesn’t understand context or editorial responsibility.
