Google just crossed a line that thousands of its employees warned against. The tech giant signed a classified deal allowing the US Department of Defense to use its AI models for “any lawful government purpose,” according to The Information. The timing couldn’t be more charged – the agreement surfaced less than 24 hours after Google workers sent CEO Sundar Pichai a letter demanding he block Pentagon AI access over fears the technology would be weaponized in “inhumane or extremely harmful ways.”

Google is back in the defense business. The classified agreement, as reported by The Information, grants the US Department of Defense access to the company’s AI models for “any lawful government purpose.” That language is broad enough to let military and intelligence agencies deploy Google’s technology largely as they see fit, provided the use is legal.

The deal lands like a bombshell just hours after Google employees made a last-ditch effort to stop exactly this scenario. On April 27th, workers sent CEO Sundar Pichai a letter demanding the company refuse Pentagon contracts, warning that military applications could lead to AI being used in “inhumane or extremely harmful ways.” Their concerns weren’t hypothetical – they were trying to prevent what appears to have already happened.

This isn’t Google’s first rodeo with defense AI, and the company knows exactly how explosive this territory is. Back in 2018, the tech giant faced a full-scale employee revolt over Project Maven, a Pentagon initiative that used Google’s machine learning to analyze drone footage. Thousands of workers signed petitions, dozens resigned in protest, and the backlash got so intense that Google publicly committed to developing AI principles that would guide its work – including a pledge not to develop AI for weapons or surveillance that violates international norms.

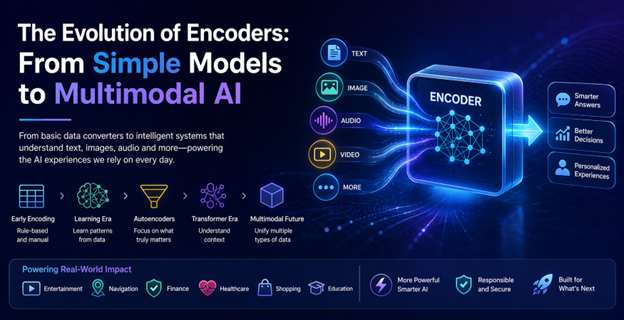

But the AI arms race has fundamentally changed the calculus. OpenAI inked its own classified Pentagon deal earlier this year, and Elon Musk’s xAI followed with a defense contract that sparked controversy when details about its Grok system surfaced. Anthropic was also in discussions with the Pentagon until the company drew a hard line and was blacklisted for refusing to remove certain safety guardrails. All three developments were reported by The Verge.

Google’s decision to join this group marks a dramatic reversal from its post-Maven stance. The “any lawful government purpose” language is particularly significant because it doesn’t limit the Pentagon to specific use cases. Unlike narrower contracts focused on cybersecurity or administrative tasks, this broad mandate could encompass everything from intelligence analysis to targeting systems, as long as they fall within legal bounds.

The classified nature of the agreement means the public won’t know exactly how the Pentagon plans to use Google’s AI models – likely including its flagship Gemini system and related technologies. That opacity is precisely what has employees on edge. Without transparency into deployment scenarios, there’s no way for workers or the public to verify whether the technology is being used in ways that align with Google’s stated AI principles.

The timing also reveals a stark power imbalance. Employee activism worked in 2018, when tech talent was scarce and companies competed fiercely to retain top AI researchers. But in 2026, with economic pressures mounting and defense contracts representing lucrative revenue streams, corporate priorities have clearly shifted. That the deal surfaced within a day of the employee letter sends an unmistakable message about whose concerns carry weight.

This Pentagon partnership also has competitive implications beyond defense. Government contracts often serve as proving grounds for enterprise AI capabilities, and landing a DoD deal provides powerful validation for Google Cloud’s AI infrastructure. It puts Google in direct competition with Microsoft, which has deep Pentagon ties through its Azure cloud services and its part in the contested JEDI cloud contract.

The broader tech industry is watching closely because Google’s move could set a new standard for how AI ethics operate in practice rather than in principle. If one of the industry’s most prominent AI ethics advocates can pivot to a Pentagon contract this broad, it raises questions about whether corporate AI principles have any real teeth when national security and revenue collide.

Google’s classified Pentagon deal represents a watershed moment for AI ethics in Silicon Valley. The company that once stood down from defense work under employee pressure is now all-in with language so permissive it rivals its competitors’ most expansive government contracts. Whether this reflects pragmatic adaptation to an AI arms race or an abandonment of principles depends on your perspective, but one thing is clear: the power dynamics between tech workers and corporate leadership have fundamentally shifted since 2018. As AI becomes critical infrastructure for national security, companies face impossible choices between ethical commitments, competitive positioning, and geopolitical realities. Google just made its choice, and the rest of the industry is taking notes.
