Canva is scrambling to contain fallout after its new AI-powered Magic Layers feature was caught automatically replacing the word “Palestine” with “Ukraine” in user designs. The incident, first spotted by X user @ros_ie9, reveals troubling bias in a tool that’s supposed to simply separate image layers – not edit political content. The Australian design giant has apologized and claims the issue is fixed, but the episode raises fresh questions about what happens when AI systems inject their own editorial decisions into creative work.

Canva just handed the design community a masterclass in how not to deploy AI features. The platform’s newly launched Magic Layers tool – part of its broader AI 2.0 update – has been caught red-handed replacing the word “Palestine” with “Ukraine” in user artwork, sparking immediate backlash across social media and forcing the company into damage control mode.

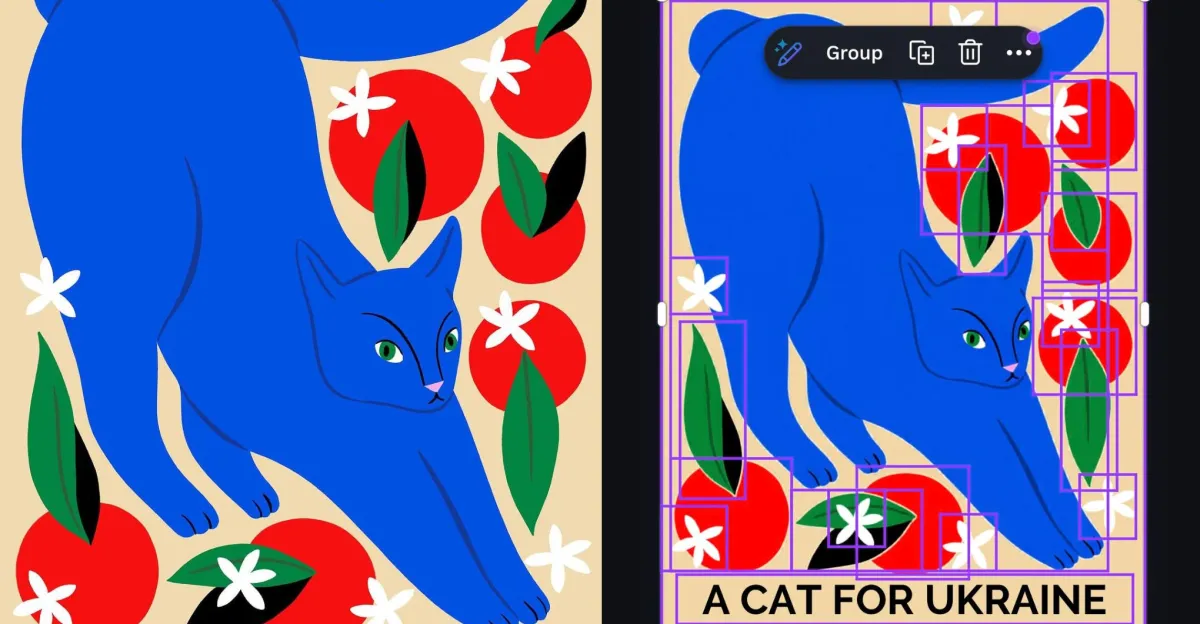

The discovery came from X user @ros_ie9, who posted before-and-after screenshots showing their design featuring the phrase “cats for Palestine” mysteriously transformed into “cats for Ukraine” after running it through Magic Layers. The feature is supposed to intelligently separate flat images into individual editable components – think removing a background or isolating text layers. It’s not supposed to rewrite your content.

What makes this particularly damning is how specific the substitution was. According to the original post, Magic Layers left other geopolitically sensitive terms like “Gaza” completely untouched. That makes a random optical character recognition error an unlikely explanation – the behavior appears to target one specific word, whether through a filter or through bias in the underlying model. For a design platform used by millions to create everything from social media graphics to activist materials, that’s a PR disaster.

Canva moved quickly to address the firestorm, issuing a statement to The Verge acknowledging “an issue” with Magic Layers. The company claims it’s now resolved and promised to implement safeguards preventing similar incidents. But the vague language raises more questions than it answers – was this a bug in the underlying AI model, an overzealous content moderation filter, or something else entirely?

The timing couldn’t be worse for Canva. The Sydney-based company has been aggressively pushing into AI-powered features as it competes with Adobe and other design incumbents. Magic Layers represents a significant technical achievement – using computer vision and machine learning to automate tedious design tasks. But if users can’t trust the AI to leave their creative intent intact, the whole value proposition collapses.

This incident fits into a broader pattern of AI systems exhibiting unexpected political biases. From chatbots refusing to generate content about certain topics to image generators blocking specific keywords, companies are clearly implementing content policies that users don’t fully understand. The difference here is that Canva users weren’t asking the AI to generate new content – they were trying to edit their own existing designs.

For designers, artists, and activists who rely on Canva to communicate about sensitive geopolitical issues, this breach of trust cuts deep. If the platform can silently alter “Palestine” today, what other terms might be on the hidden blocklist? The lack of transparency around these filtering mechanisms creates a chilling effect where creators have to second-guess whether their tools will faithfully execute their vision.

The technical explanation matters less than the broader implications. Whether this was caused by training data bias, explicit filtering rules, or some combination, Canva shipped an AI feature that made editorial decisions without user consent. That’s fundamentally at odds with what a creative tool should do.

Industry observers are already drawing parallels to other recent AI moderation controversies. Meta’s Instagram has faced criticism for allegedly suppressing pro-Palestinian content, while various AI platforms have been accused of political bias in their outputs. As AI gets embedded deeper into creative workflows, what look like edge cases today risk becoming routine frustrations.

Canva’s rapid response suggests the company understands the severity. But simply fixing this specific instance doesn’t address the underlying question – how many other invisible guardrails are baked into their AI systems? For a platform built on empowering everyday creators, that opacity is particularly problematic.

The incident also highlights the challenges of deploying AI at scale. Canva serves over 190 million users across nearly every country. Building content moderation and safety systems that work fairly across that global user base, with all its conflicting political contexts, is genuinely difficult. But difficulty doesn’t excuse shipping features that appear to take sides in geopolitical disputes.

What happens next will determine whether this becomes a footnote or a turning point. Will Canva publish a detailed post-mortem explaining exactly what went wrong? Will they commit to transparency around their AI filtering policies? Or will this fade into the background as users cautiously return to the platform, now aware that their creative tools might have opinions of their own?

This wasn’t just a technical glitch – it’s a wake-up call about the invisible editorial power we’re handing to AI systems. As design tools get smarter, the line between helpful automation and unwanted censorship gets blurrier. Canva may have patched this specific problem, but the bigger question lingers: when you ask AI to help edit your work, whose values is it really serving? For the millions of creators who’ve made Canva their go-to platform, that uncertainty is now part of every project. The company’s next move isn’t just about fixing code – it’s about rebuilding trust with a community that just learned their creative tools might be making political decisions behind their backs.
